As we continue to see more and more AI technology in our lives, it’s imperative that we take a moment to think about the ethical implications of Artificial Intelligence (AI). AI products use a completely new design approach that requires even more care — anticipatory design. This predicts and responds to user behavior, without requiring direct input from the user. While this approach increases convenience and relevance, it also reduces user choice. This opens us up to a whole world of moral considerations that designers are best suited to deal with.

Designers think about developing products completely differently than the data scientists who put together AI models. A data scientist will look at the massive streams of data available and ask, “What can accurately be predicted using this data?” A designer will look at humanity and ask, “What is the desirable and usable outcome for the people who will use this tool?” As AI moves control away from users, the second question has never been more vital. Just because AI can do something doesn’t mean it should; there may be negative outcomes that outweigh the benefits.

Designers have a big role to play in shaping the future of AI from an ethical standpoint. Here are five different hats an AI Designer must wear:

Prevent unfavorable outcomes

AI technologies have far-reaching societal implications, including job displacement, social biases, and a widening digital divide. We must involve a diverse set of stakeholders to ensure that AI products align with societal values and promote collective well-being.

One way to prevent unfavorable outcomes is an approach called back-casting. This means predicting the negative consequences of using AI and working backwards to prevent them from happening. The designer instead encourages features that will lead to positive outcomes. It’s unlikely that every scenario will be predicted, but designers should do everything they can to catch threats.

The last major technological advancement that shook up the fabric of society was the dawn of social media. What started as a means for keeping up with friends ultimately produced an epidemic in the productization of oneself. While the aftermath is still being studied, so far, it’s led to increased phone addiction, depression, and lower feelings of self-worth across the globe. While I would like to believe nobody involved in early social media development meant for this to happen, it was a consequence of product teams not thinking through all possible ethical implications of the product.

We must not forget our tendency to take shortcuts and offload information into our tools when possible. For example, I am horrible at directions – to the point where my best chance of navigating correctly is to go against my gut reaction. I blame my reliance on the digital map that has been in my pocket for the entirety of my life. While navigation apps are incredibly useful, if I lose my phone, I am left worse off than I started.

Designers at the table must take note of which skills robots may take away from people, and ask whether we are really comfortable with machines being the sole providers of those skills. We can aim for a balanced set of knowledge by allowing machines to train humans in a skill while simultaneously performing that skill for us. To put this into context using the navigation example, the app could ask me whether I think I should go left or right before telling me the real answer.

Understanding and showing emotion

The bar for building empathy in AI has risen in recent years. It’s not enough for designers to simply design with empathy; our resulting AI needs to actually show empathy in order to build trust with users. Even though AI is only a tool, when a user sees it truly understands their needs, desires, and values, they will be more willing to use that tool in many areas of their life.

We must also train AI to recognize the inherent value of humans, in order for us to “live” together in harmony. Otherwise, it will make recommendations that are immoral or not in our best interest. AI may become so intelligent and skillful that it doesn’t see the value in including us at all anymore, and may make certain choices without consulting us first.

Even if we build robots to show empathy, they still can’t actually feel empathy the way humans can. After all, there is no real shared experience of life, which is the foundation for empathy. Designers need to know when to encourage emotional bonding with AI and when to actively discourage it. For example, it may be good to name a medical assistant robot “Dr. Joe” to give it personality and build trust and comfort with patients. However, a robot made to detect bombs should not be given personality or emotions, so that the controller does not become too attached to let it risk its “life.”

Encouraging collaboration and symbiosis

The importance of conversation cannot be overstated when designing for people from all walks of life. I can run through problems and solutions in my head all day long, but the sounding board will be limited to my own experiences, ideas, and conclusions. Conversation forces us to filter out biases and address conflict that we would not encounter with ourselves. This makes it a useful design tool.

AI can help break down these personal echo chambers by facilitating conversations between humans and machines via interfaces, but only if it is intentionally designed to act that way. This facilitation is the responsibility of the designer. We should not think of AI as just a tool that gives us an answer once we ask it a question — that’s boring! We want an organic, conversational interface that introduces possibilities we haven’t yet thought of. We want the ability to be proven right, proven wrong, and proven unsure. We want AI to not only answer our questions, but to present us with questions in return - to reveal to us our own blind spots.

An AI output should always be considered a work in progress to be improved upon – the results can always be better.

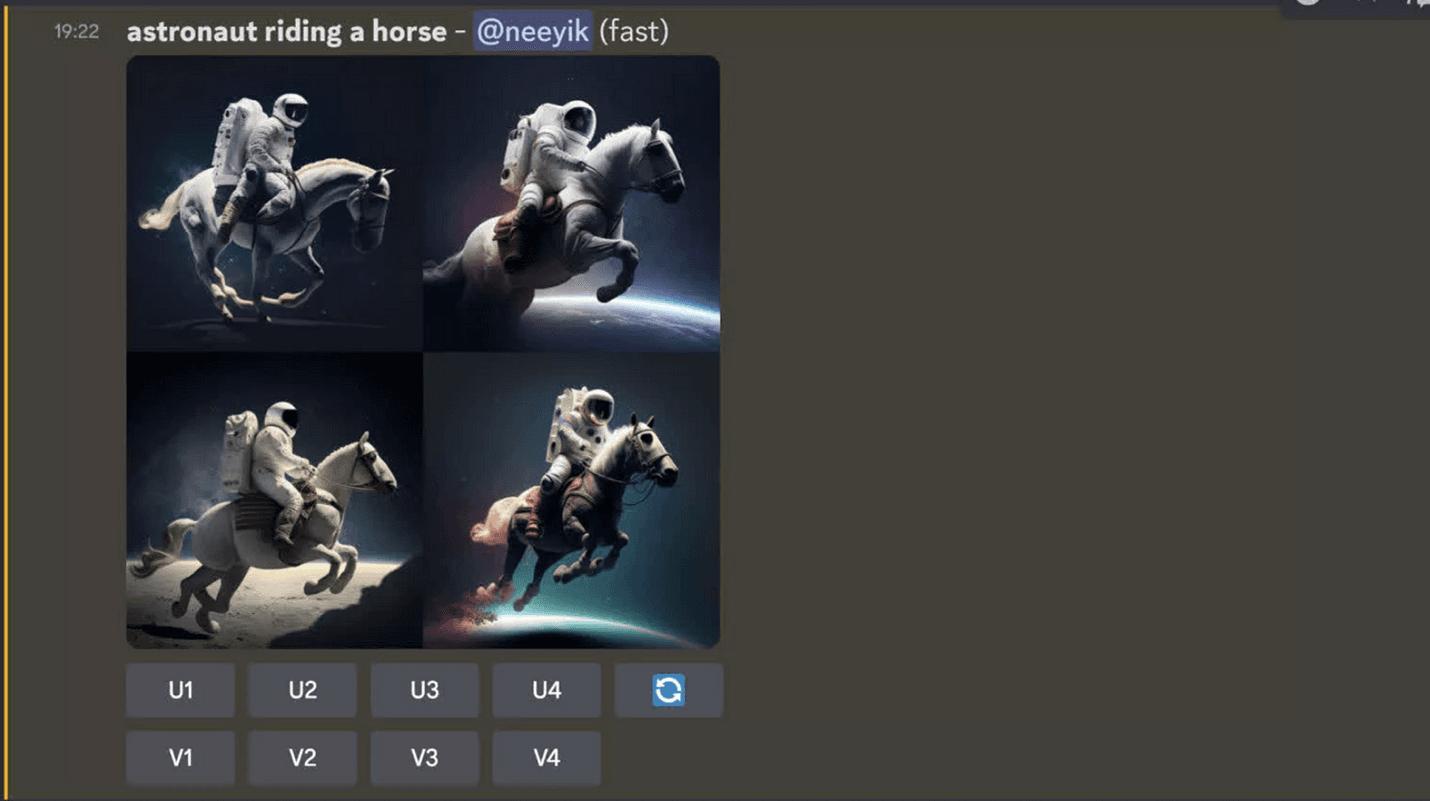

In the AI program Midjourney, users are shown a variety of images based on their request and presented with options like upscaling to create a higher-quality version or generating similar versions. The AI responds to and depends on the user’s input in order to create something the user could never have crafted themselves.

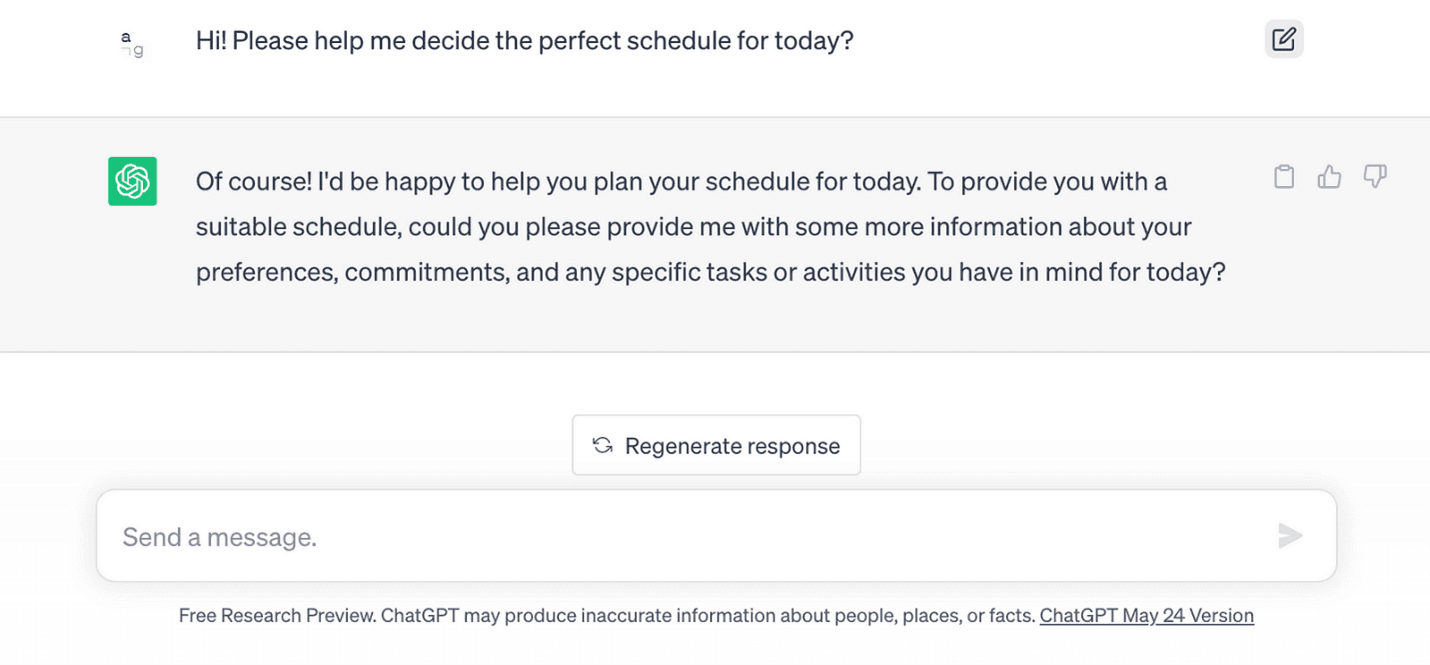

The AI chatbot ChatGPT does something similar with its text responses. The user asks the AI a specific question and the AI generates a text reply. Depending on how happy the user is with the reply, they can edit the original question, generate a new response, or ask another question. The AI retains the context of the conversation, which makes it feel like a real conversation. You can even ask the bot to act as a type of person (e.g. designer, engineer, therapist) to provide more realistic or specific insight.

As a general rule, conversational AI bots should be:

Ensure Transparency

In an ideal world, AI should be able to describe itself in detail. To use the tool to make smart decisions, users should have a clear understanding of how the system operates, what data it uses, how it makes decisions, and what its capabilities, limitations, and biases are.

One criticism of the machine learning that powers AI is that it can be seen as a “black box”: a system that produces results without a clear explanation of how those results were arrived at. However, there are various approaches and techniques within the field that can be used to explain, interpret, and even visualize the results produced by these algorithms. For instance, we can use feature importance scores to identify the most significant variables that are driving the results. We can also use techniques like partial dependence plots or SHAP (SHapley Additive exPlanations) values to visualize how changes in specific variables are affecting the model’s predictions. By adopting these techniques, we can gain a deeper understanding of how AI tools make decisions and improve our ability to interpret and trust the results produced by these powerful algorithms.
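To make the idea of a feature importance score concrete, here is a minimal sketch of one common way to compute it, permutation importance: shuffle one input column at a time and measure how much the model’s error grows. The `model` function and the random data are hypothetical stand-ins invented for illustration; a real project would use its own trained model and would more likely reach for an established library than hand-rolled code.

```python
import random


def model(row):
    # Hypothetical stand-in for a trained model: it leans heavily on
    # feature 0, slightly on feature 1, and ignores feature 2.
    return 3.0 * row[0] + 0.5 * row[1]


def mean_squared_error(rows, targets):
    # Average squared gap between the model's predictions and the targets.
    return sum((model(r) - t) ** 2 for r, t in zip(rows, targets)) / len(rows)


def permutation_importance(rows, targets, n_features, seed=0):
    # Importance of feature j = how much the error rises once column j
    # is shuffled, which breaks its link to the target.
    rng = random.Random(seed)
    baseline = mean_squared_error(rows, targets)
    scores = []
    for j in range(n_features):
        column = [r[j] for r in rows]
        rng.shuffle(column)
        shuffled = [r[:j] + [v] + r[j + 1:] for r, v in zip(rows, column)]
        scores.append(mean_squared_error(shuffled, targets) - baseline)
    return scores


# Made-up data: 200 rows with three random features each.
data_rng = random.Random(42)
rows = [[data_rng.random() for _ in range(3)] for _ in range(200)]
targets = [model(r) for r in rows]

importances = permutation_importance(rows, targets, n_features=3)
for index, score in enumerate(importances):
    print(f"feature {index}: importance {score:.3f}")
```

A designer could surface a ranking like this in the interface (“this recommendation was driven mostly by X”) as one modest step toward opening up the black box.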

No AI model is perfect (hence the “learning” part of “machine learning”), so users should approach an AI product with a healthy degree of skepticism. While this is a different approach than the one taken with traditional applications, the power of AI has raised the stakes. Designers now have the ability to affect what users perceive as reality. In the realm of spatial computing (i.e. involving our senses; think virtual reality), designers are essentially creating human perception itself. We take information in with our senses, our brain processes it, and the output is what we understand as reality. It is vital that AI is either accurate to reality or honest about how it isn’t; otherwise, it shouldn’t exist.

Empower people of all kinds

In case of an emergency, robots should always have a manual override no matter how good they are. As advocates for the user, designers must always put people first. This means allowing the user to retain as much power over the robot as possible.

One way to do this is to offer options to overrule AI suggestions or recommendations and give users a way to provide feedback on AI decisions. Designers can use feedback to refine algorithms, reduce biases, and improve system performance. By working together in an iterative process, AI systems can better align with user needs and preferences, making for a better user experience.
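As a rough sketch of what “overrule and give feedback” could look like in code, the example below records whether the user accepted each AI suggestion or replaced it with their own choice. Every name here (`Suggestion`, `FeedbackLog`, `override_rate`) is hypothetical and invented for illustration; it is not taken from any particular product.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Suggestion:
    text: str
    accepted: Optional[bool] = None  # None until the user decides
    user_text: Optional[str] = None  # filled in when the user overrides


@dataclass
class FeedbackLog:
    entries: List[Suggestion] = field(default_factory=list)

    def accept(self, suggestion: Suggestion) -> str:
        # The user keeps the AI's suggestion as-is.
        suggestion.accepted = True
        self.entries.append(suggestion)
        return suggestion.text

    def override(self, suggestion: Suggestion, user_text: str) -> str:
        # The user's own choice always wins; the override is kept as feedback.
        suggestion.accepted = False
        suggestion.user_text = user_text
        self.entries.append(suggestion)
        return user_text

    def override_rate(self) -> float:
        # Share of suggestions the user rejected; a possible signal for
        # where the model needs refinement.
        if not self.entries:
            return 0.0
        rejected = sum(1 for s in self.entries if s.accepted is False)
        return rejected / len(self.entries)


log = FeedbackLog()
first = log.accept(Suggestion("Take Main Street"))
second = log.override(Suggestion("Take the highway"), "Take the scenic route")
print(first, "|", second, "|", log.override_rate())
```

The design choice worth noticing is that the override path returns the user’s text, not the AI’s: the system records the disagreement but never argues with it.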

With so many checks and balances required by designers and engineers, what exactly should AI be trusted with? A good rule of thumb is that machines should make decisions that involve logical analysis to eliminate options based on predefined criteria. They should not replace human decision making in scenarios that require an inherent wisdom of right and wrong, o

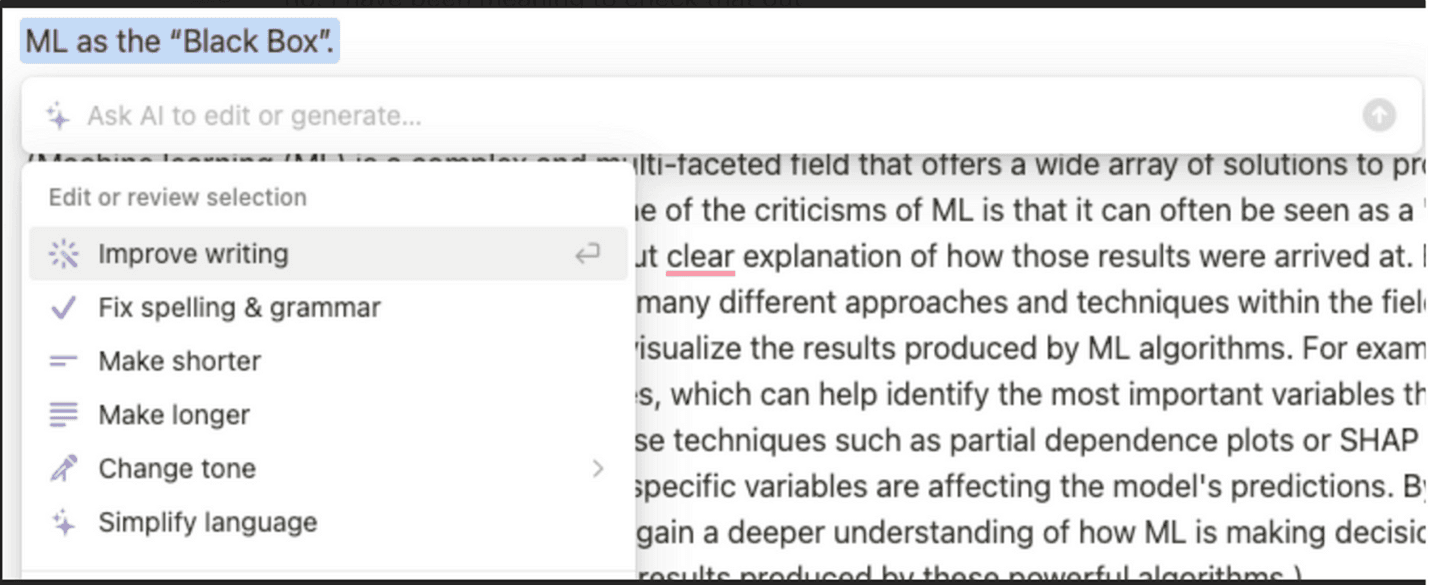

Notion AI is a great example of design that keeps the user in control while the AI serves its functional purpose of being a text editor and writing tool. It can improve content, fix spelling and grammar errors, make text shorter or longer, and even change the writing tone to be more friendly or serious. However, the user still calls the shots with the flexibility to discard or modify any suggestions it provides.

AI systems are only as unbiased as the data they are trained on. Designers should ensure that datasets are diverse, inclusive, and representative of the target user population. They can do this by incorporating data from different demographic groups, considering various cultural perspectives, and making sure underrepresented or marginalized groups are substantially represented in the dataset.

Designers should employ rigorous evaluation techniques to identify and assess potential biases in AI systems. This involves analyzing the impact of the AI system across different demographic groups and identifying disparate outcomes or unequal treatment. By understanding these biases, designers can work towards addressing them.
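One simple way to look for disparate outcomes is to compare how often each demographic group receives a favorable result. The sketch below does this with made-up records; the group labels, the data, and the function names are all hypothetical. The ratio of the lowest to the highest rate is sometimes checked against a “four-fifths” (0.8) heuristic, but treat that threshold as an assumption to revisit for your own context, not a guarantee of fairness.

```python
from collections import defaultdict


def selection_rates(records):
    # records: iterable of (group, favorable_outcome) pairs.
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, favorable in records:
        totals[group] += 1
        if favorable:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    # Lowest group rate divided by highest; 1.0 means identical rates.
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Made-up outcomes: group A is favored 8 times out of 10, group B 5 out of 10.
records = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)

rates = selection_rates(records)
ratio = disparate_impact_ratio(rates)
print(rates, round(ratio, 3))
```

A low ratio does not explain why the gap exists; it only flags where designers and data scientists should start asking questions.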

As AI takes off, designers will play a vital role in combating ethical concerns by anticipating risks, designing for empathy, driving conversation, promoting transparency, and empowering people. Designers will need to wear all five of these hats to shape a future where AI and humanity coexist harmoniously.

Now, go design in peace and put on those hats!